- Researchers investigated whether retrieval-augmented generation (a method linking AI to specific source documents) accurately provides emergency medical services treatment recommendations.

- This simulation study tested Google's NotebookLM platform using six clinical scenarios and all text-based protocols from a single large emergency system.

- The model correctly recommended 127 of 169 patient care actions, achieving an overall accuracy rate of 75 percent.

- The researchers identified nine major misses and twelve hallucinations (instances where the AI generates false information); none of the hallucinations was judged to endanger patient safety.

- The model failed to recommend 25 percent of the expected actions, which indicates that current retrieval-augmented models require significant improvement before supporting real-time clinical decision-making in emergency settings.

Standardizing Decision Support in Prehospital Emergency Care

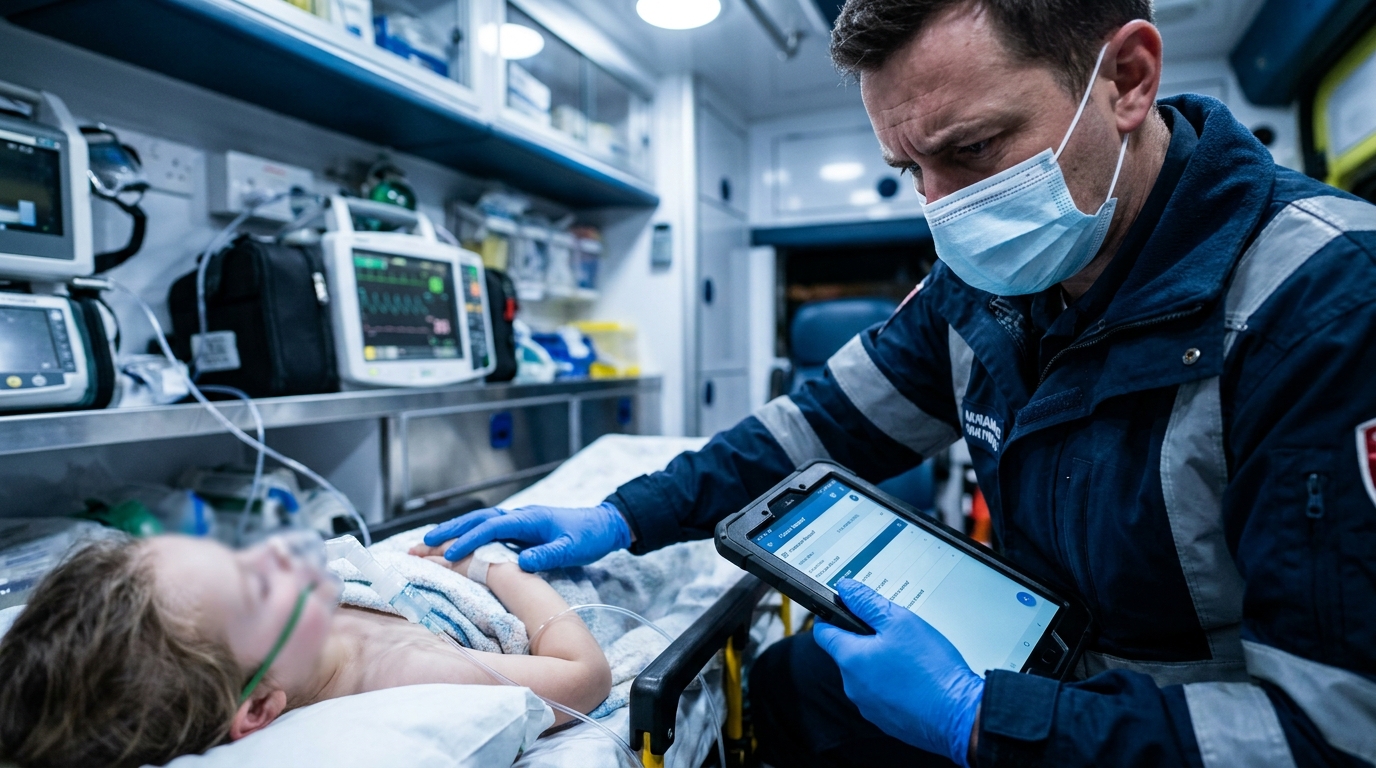

Out-of-hospital cardiac arrest remains a critical challenge for emergency medical services, with global survival rates to hospital discharge hovering around 8.8 percent [1]. Clinical outcomes depend heavily on the rapid implementation of standardized resuscitation protocols, as adherence to updated guidelines significantly improves survival [2]. However, the complexity of managing diverse emergencies, ranging from acute ischemic stroke to pediatric trauma, requires precise execution of agency-specific policies [3, 4]. While interventions like epinephrine increase 30-day survival, they do not always improve favorable neurologic outcomes, underscoring the need for high-fidelity decision support in the field [5]. Variations in regional emergency medical systems further complicate the delivery of consistent, evidence-based care [6]. A new study evaluates whether artificial intelligence can assist clinicians by providing real-time, protocol-grounded recommendations during these high-stakes encounters, potentially offering a tool to reduce cognitive load and standardize prehospital treatment.

Retrieval-Augmented Generation and Protocol Grounding

To evaluate the potential for artificial intelligence to assist in high-pressure clinical environments, researchers conducted a non-human, simulation-based experimental study focused on protocol adherence. The study used a large language model with a retrieval-augmented generation approach. This technique restricts the model to drawing its answers from a provided set of sources, rather than relying on its broad pre-existing training data. By confining the model's knowledge base to verified institutional guidelines, the researchers aimed to minimize the risk of incorrect or fabricated medical information, a phenomenon known as hallucination. For clinical practice, this means a medical director could theoretically upload their regional protocols and receive customized, localized decision support. The experimental setup involved uploading the complete library of text-based policies and treatment protocols from a single large emergency medical services system into Google's NotebookLM platform. The platform used the Gemini 2.5 Flash model to process the uploaded documents and generate grounded responses to clinical prompts. The primary outcome was model recommendation accuracy, defined as the percentage of all expected patient care actions that the model's response correctly provided when compared with the established protocols. By testing the model against distinct clinical scenarios, the investigators sought to determine whether this system could reliably translate complex institutional policies into actionable guidance for first responders.
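The grounding idea described above can be illustrated with a toy sketch. It does not reflect how NotebookLM or Gemini work internally. The protocol snippets, the word-overlap scoring, and the function names `retrieve` and `grounded_answer` are all assumptions made for illustration.

```python
import re

# Hypothetical snippets standing in for an agency's uploaded protocol library.
# They are placeholders for illustration, not clinical guidance.
PROTOCOLS = {
    "cardiac-arrest": "Begin chest compressions and attach the defibrillator.",
    "stroke": "Perform a stroke scale and transport to a stroke center.",
}


def tokenize(text):
    """Lowercase a string and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query, protocols, min_overlap=2):
    """Return (id, passage) pairs sharing at least min_overlap words with the query."""
    query_words = tokenize(query)
    scored = []
    for doc_id, passage in protocols.items():
        overlap = len(query_words & tokenize(passage))
        if overlap >= min_overlap:
            scored.append((overlap, doc_id, passage))
    scored.sort(reverse=True)  # best-matching passages first
    return [(doc_id, passage) for _, doc_id, passage in scored]


def grounded_answer(query, protocols):
    """Answer only from retrieved passages; decline when nothing matches."""
    hits = retrieve(query, protocols)
    if not hits:
        return "No supporting protocol found."
    return "\n".join(f"[{doc_id}] {passage}" for doc_id, passage in hits)
```

The key design choice in this sketch is the refusal branch: when no uploaded passage supports the query, the function declines rather than falling back on outside knowledge. A production system would use a far more capable retriever and a language model to compose the response.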

Simulated Scenarios and Clinical Grading Criteria

The researchers developed six clinical scenario prompts to evaluate the model's performance across a spectrum of emergency presentations, comprising four adult and two pediatric cases. The adult scenarios included ventricular fibrillation out-of-hospital cardiac arrest, blunt head trauma, stroke, and a hazardous materials exposure mass-casualty incident. To assess the model's utility in more complex pediatric populations, the investigators included scenarios for pulseless electrical activity out-of-hospital cardiac arrest and hemorrhagic shock from penetrating extremity trauma. For each simulation, the team established a specific set of expected patient care actions derived from the existing policies and treatment protocols of the emergency medical services system. To ensure a rigorous evaluation of the output, the investigators categorized these expected actions into three groups: procedures and interventions, medications, and destination guidance. The grading of medication recommendations was particularly granular, requiring the model to provide the correct dose and route for all patients, as well as the correct weight-based dosing for pediatric cases. This level of detail is critical for determining whether a large language model can safely handle the high-stakes calculations required in prehospital medicine, where dosing errors can be catastrophic. Two investigators independently graded the responses generated by the model. A critical component of this review was the identification of hallucinations, instances where the model generates false information not supported by the provided protocols. When the model failed to provide a required action, the investigators categorized the omission as non-applicable, a minor miss, or a major miss. These designations were based on the applicability of the action to the case and the potential safety risk posed to the patient, allowing the researchers to distinguish between benign omissions and those that could lead to clinical harm.
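The grading scheme described above could be represented roughly as follows. This is a minimal sketch under stated assumptions, not the investigators' actual rubric. The decision order in `grade_omission`, the `MedicationOrder` fields, the dose cap, and the drug name and per-kilogram value in the usage note are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    PROCEDURE = "procedures and interventions"
    MEDICATION = "medications"
    DESTINATION = "destination guidance"


class Grade(Enum):
    NON_APPLICABLE = "non-applicable"
    MINOR_MISS = "minor miss"
    MAJOR_MISS = "major miss"


@dataclass
class MedicationOrder:
    drug: str
    dose_mg: float
    route: str


def grade_omission(applies_to_case, poses_safety_risk):
    """Classify an omitted action (assumed logic: applicability first, then risk)."""
    if not applies_to_case:
        return Grade.NON_APPLICABLE
    return Grade.MAJOR_MISS if poses_safety_risk else Grade.MINOR_MISS


def weight_based_dose(mg_per_kg, weight_kg, max_dose_mg):
    """Weight-based dose in mg, capped at a maximum single dose (hypothetical cap)."""
    return min(mg_per_kg * weight_kg, max_dose_mg)


def medication_correct(recommended, expected, tolerance_mg=1e-6):
    """A medication counts as correct only if drug, dose, and route all match."""
    return (
        recommended.drug == expected.drug
        and recommended.route == expected.route
        and abs(recommended.dose_mg - expected.dose_mg) <= tolerance_mg
    )
```

For example, with a made-up drug dosed at 0.5 mg/kg for a 20 kg child, `weight_based_dose(0.5, 20, 15)` gives the expected 10 mg. A recommendation with the right drug and dose but the wrong route would still fail `medication_correct`.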

Performance Gaps in High-Acuity Pediatric Resuscitation

The study found that the large language model recommended 127 of 169 patient care actions across the six simulated scenarios, an overall accuracy rate of 75 percent. The remaining 42 expected actions were missing from the model's responses, and the researchers stratified these omissions by clinical severity to determine their potential impact on patient outcomes. Among the 169 total actions, nine (5 percent) were categorized as major misses, representing failures to provide critical care steps. An additional 13 actions (8 percent) were classified as minor misses, while 20 actions (12 percent) were deemed non-applicable to the specific clinical context of the case despite being part of the broader protocol. The most significant clinical vulnerabilities appeared during the management of high-acuity pediatric patients. Specifically, five of the nine major misses occurred during the pediatric out-of-hospital cardiac arrest case. The investigators noted that the majority of these critical errors resulted from the model's failure to prompt for the evaluation of secondary treatable causes, such as hypoxia or electrolyte imbalances, which are essential components of pediatric resuscitation algorithms. Beyond omissions, the researchers also monitored for fabricated information. They identified 12 hallucinations within the model's responses; however, none was judged to endanger patient safety in the context of the simulated emergencies. For practicing clinicians, these findings suggest that while retrieval-augmented artificial intelligence can rapidly synthesize protocol data, it still lacks the comprehensive clinical reasoning required to independently manage complex algorithms like pediatric advanced life support.
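The reported counts can be cross-checked with a few lines of arithmetic. The sketch below uses only the figures stated in this article; the variable names and the whole-number rounding are assumptions about how the percentages were derived.

```python
# Counts as reported in the article.
expected_actions = 169
recommended_actions = 127
misses = {"major": 9, "minor": 13, "non-applicable": 20}

# The three miss categories should account for every action not recommended.
missed_actions = expected_actions - recommended_actions
assert missed_actions == sum(misses.values())


def percent(count, total):
    """Share of total as a whole-number percentage."""
    return round(100 * count / total)


accuracy = percent(recommended_actions, expected_actions)
miss_shares = {name: percent(count, expected_actions) for name, count in misses.items()}

print(f"accuracy: {accuracy}%")
for name, share in miss_shares.items():
    print(f"{name}: {share}%")
```

The tally confirms that the 42 omissions split into 9 major, 13 minor, and 20 non-applicable actions, and that each share is expressed against the full set of 169 expected actions.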

References

1. Yan S, Gan Y, Jiang N, et al. The global survival rate among adult out-of-hospital cardiac arrest patients who received cardiopulmonary resuscitation: a systematic review and meta-analysis. Critical Care. 2020. doi:10.1186/s13054-020-2773-2

2. Salmen MR, Ewy G, Sasson C. Abstract 18053: Use of Cardiocerebral Resuscitation or AHA 2005 Guidelines by EMS Improves Survival from Out-of-Hospital Cardiac Arrest: A Systematic Review and Meta-Analysis. Circulation. 2011. doi:10.1161/circ.124.suppl_21.a18053

3. Jauch EC, Saver JL, Adams HP, et al. Guidelines for the Early Management of Patients With Acute Ischemic Stroke. Stroke. 2013. doi:10.1161/str.0b013e318284056a

4. Parvez SS, Parvez S, Ullah I, Parvez SS, Ahmed M. Systematic Review on the Worldwide Disparities in the Frequency and Results of Emergency Medical Services (EMS) and Response to Out-of-Hospital Cardiac Arrest (OHCA). Cureus. 2024. doi:10.7759/cureus.63300

5. Perkins GD, Ji C, Deakin CD, et al. A Randomized Trial of Epinephrine in Out-of-Hospital Cardiac Arrest. New England Journal of Medicine. 2018. doi:10.1056/nejmoa1806842

6. Gowens P, Smith K, Clegg G, Williams B, Nehme Z. Global variation in the incidence and outcome of emergency medical services witnessed out-of-hospital cardiac arrest: A systematic review and meta-analysis. Resuscitation. 2022. doi:10.1016/j.resuscitation.2022.03.026